While every major platform company is racing to build larger AI models, deploy more GPUs, and construct massive data centers to power the next generation of software experiences, Apple is largely staying out of that race, choosing to partner rather than build.

At the same time, public sentiment toward AI is souring. Excitement about productivity and creativity is increasingly mixed with concern about surveillance, data ownership, synthetic media, and the commercialization of personal information. The more deeply AI is embedded in daily life, the more trust becomes the critical currency.

It also feels as if we are on the verge of a fundamental change in human society. With the "intelligence" portion of AI improving so fast, it seems plausible that the world will look completely different within the next 12-18 months. That feeling creates massive uncertainty that has markets, and the world, on edge.

Against this backdrop, Apple appears to be taking a fundamentally different approach than its peers: preserving capital, partnering where appropriate, and quietly continuing to build Apple Silicon that runs AI compute locally, on the device.

At one point, Apple was widely criticized for its silence amid the AI race. Then came false starts with the launch of Apple Intelligence and Siri 2.0. In January, Apple announced a deal with Google to have Gemini power the next version of Siri. It's this last move that gives me hope for Apple's strategy. More on that later.

Apple’s Contrarian Strategy: Spend Less, Integrate More

Apple’s projected capital expenditures for 2026 are undisclosed but are not expected to increase sharply. On the surface, that could look like Apple underinvesting in AI.

But Apple’s history suggests something different. The company often enters markets later, but with tighter integration and better user experience.

Instead of building massive cloud AI infrastructure, Apple is investing in three areas:

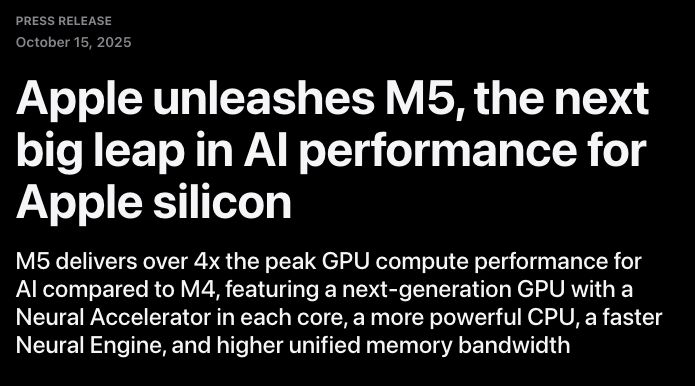

Custom silicon (M-series, A-series, Neural Engines)

On-device machine learning

Ecosystem-wide deployment across billions of devices

Apple is betting that the future of AI is decentralized and local, and that it will run on Apple Silicon.

Apple’s most underappreciated AI asset is its silicon program.

Unlike most competitors, Apple designs all of the following in-house (TSMC manufactures the chips):

CPU architecture

GPU architecture

Neural processing units

Memory architecture

OS-level ML acceleration frameworks

This allows Apple to optimize AI workloads vertically across hardware and software.

The M-series chips are particularly important. They combine:

High-performance CPU cores

Integrated GPUs

Dedicated neural processing hardware

Unified memory architecture

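This matters enormously for AI because memory bandwidth and latency are often bigger bottlenecks than raw compute. With unified memory, the CPU, GPU, and Neural Engine share a single pool, so a model does not have to fit into a separate, smaller pool of dedicated GPU memory.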
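A common back-of-the-envelope estimate illustrates why bandwidth matters: when generating text one token at a time, a model has to read roughly all of its weights from memory for every token, so memory bandwidth divided by model size gives a rough ceiling on tokens per second. The sketch below assumes a hypothetical 8-billion-parameter model and uses the M1 Max's advertised 400 GB/s of memory bandwidth. It deliberately ignores KV-cache reads, compute time, and software overhead, so real-world throughput will be lower.

```python
def decode_ceiling_tokens_per_sec(
    params_billions: float, bits_per_weight: float, bandwidth_gb_per_s: float
) -> float:
    """Rough ceiling on single-stream decode speed for a memory-bound model.

    Assumes each generated token reads every weight from memory exactly once and
    ignores KV-cache traffic, compute time, and software overhead.
    """
    # Billions of parameters x bytes per parameter = gigabytes of weights.
    weights_gb = params_billions * bits_per_weight / 8
    return bandwidth_gb_per_s / weights_gb


M1_MAX_BANDWIDTH_GB_PER_S = 400  # Apple's advertised peak for the M1 Max

# Hypothetical 8B-parameter model at 4-bit vs. 16-bit precision.
print(decode_ceiling_tokens_per_sec(8, 4, M1_MAX_BANDWIDTH_GB_PER_S))   # 100.0
print(decode_ceiling_tokens_per_sec(8, 16, M1_MAX_BANDWIDTH_GB_PER_S))  # 25.0
```

Quartering the bytes per weight quadruples the ceiling without any extra compute, which is why quantized models and high-bandwidth unified memory pair so well for local inference.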

Local Models Are Already Fast Enough

The shift toward on-device AI is not theoretical anymore; I've tried it and it works.

Ever since streamr.ai was acquired, I had been in a bit of tech limbo. I didn't have the focus or motivation to go deep in any one area. I wanted to do everything, and that left me doing almost nothing. I dabbled in small projects here and there. I looked at the hype surrounding OpenClaw, but the token usage was so high that it wasn't worth the cost.

I started running inference locally on my MacBook Pro M1 Max (32GB memory) using LM Studio, and the performance was reasonable. I then tested on an M4 with 48GB of memory, and I was blown away by the response time. Coincidentally, the model running on the M4 was Google's Gemma. That is when the light bulb went on: Apple is brilliantly positioned to leapfrog the consumer AI experience.

Here's the future:

Apple partners with AI companies that are willing to let Apple fine-tune their models to be highly performant on Apple hardware

These models become available for purchase in the App Store

Users can pull these models into their apps of choice (LM Studio, Ollama, Cursor, etc.)

This changes the entire conversation around AI architecture. It also changes the conversation around AI trust.

Trust Will Be the Primary Differentiator

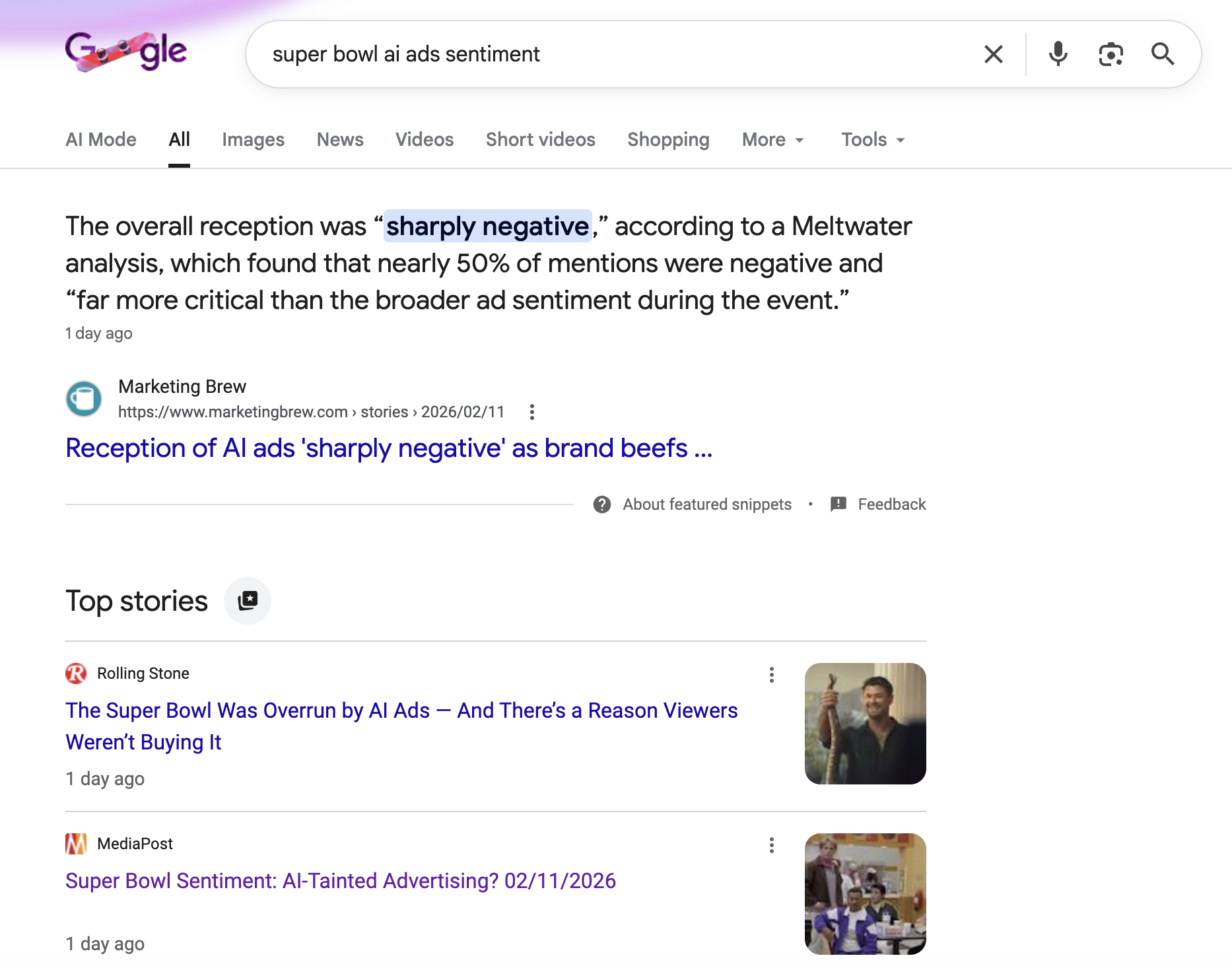

The Super Bowl was the tipping point for AI overload:

ChatGPT + Ads = $#%!

ChatGPT, in many cases, holds incredibly personal information about its users. Although OpenAI says it will not share personal information from chats with advertisers, we know the temptation will always exist.

Having been in advertising, I know that data is king. In fact, OpenAI would be missing a massive opportunity if it did NOT use the gold mine of data it has to build the most effective ad platform in the world. At some point, the billions of dollars in VC funding will dry up, and the burden of paying for compute will fall on consumers. Enter advertising.

For Apple, NOW is the time to capitalize on the weakening consumer sentiment that OpenAI is experiencing.

Apple already has something competitors cannot easily replicate: a massive installed base of premium devices with Apple Silicon ready to power AI compute.

If Apple deploys advanced on-device models across its devices, it could instantly become one of the largest AI deployment platforms in the world.

No additional cloud capacity required.

Why This Strategy Could Compound Over Time

Apple’s approach benefits from compounding advantages:

Hardware cycles improve AI automatically

Every new chip generation improves local AI capability.

Privacy narrative strengthens brand trust

If competitors face data controversies, Apple’s positioning improves.

Developer ecosystem locks in

On-device AI APIs could create a new generation of local-first applications. LM Studio already uses this to its advantage: load a model, click a button, and the model becomes available to the local network through standard OpenAI-compatible API endpoints.

Cloud dependency decreases

As models become more efficient, local-first architectures become more viable. The cloud will remain important for training, enterprise workloads, and more, but for consumer inference, local will win.

Apple is positioned to own the device layer of AI.
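To make the LM Studio point concrete: its local server accepts requests in the OpenAI chat-completions format, by default at `http://localhost:1234/v1`. The sketch below uses only Python's standard library. The model identifier is a hypothetical placeholder for whatever model you have loaded, and the base URL assumes LM Studio's default port on the same machine; to reach it from another device on the network, swap in the Mac's LAN address.

```python
import json
import urllib.request

# Assumption: LM Studio's local server on its default port. Replace "localhost"
# with the Mac's LAN address to reach it from another device on the network.
BASE_URL = "http://localhost:1234/v1"


def build_chat_request(prompt: str, model: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Build an OpenAI-style chat-completions POST request."""
    body = {
        "model": model,  # hypothetical placeholder: use the identifier LM Studio shows
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str, model: str, base_url: str = BASE_URL) -> str:
    """Send the request to the local server and return the reply text."""
    request = build_chat_request(prompt, model, base_url)
    with urllib.request.urlopen(request, timeout=120) as response:
        payload = json.load(response)
    # OpenAI-compatible responses put the reply in choices[0].message.content.
    return payload["choices"][0]["message"]["content"]
```

With a model loaded and the server running, `chat("Explain unified memory in one sentence.", model="your-loaded-model")` returns the model's reply. Because the request format mirrors OpenAI's, existing OpenAI client code can often be pointed at the local server by changing only the base URL.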

What Apple Must Do

Apple does not need to hit a home run here. Deeply integrating AI into the OS is something that will happen organically over time. For now, Apple needs to take the existing user experience and make it feel private and wonderful.

Steve Jobs understood that Apple’s role was not to create every piece of software, but to create the medium through which others could create. The App Store became that medium: a distribution, monetization, and trust layer that unlocked an entire creative economy on top of Apple hardware. Apple may now be in a similar position with AI. Rather than trying to build every model or application itself, the opportunity is to build the platform that enables an ecosystem of AI experiences.

How Do They Get There

Apple's fastest path is to acquire a company like LM Studio, lock in launch partnerships with additional model providers, create a Hugging Face pipeline for submitting models fine-tuned for Apple Silicon (MLX), and launch it all as a cohesive product that competes with ChatGPT, Claude, and others. Call it AI Studio.

If public sentiment towards AI continues to erode, Apple will emerge as one of the dominant forces of consumer AI.

Not because it spent the most money.

Not because it trained the largest model.

But because it delivered AI people actually trust.

And in the long run, trust might be the most valuable infrastructure of all.